There's a well-established pattern in B2B sales: the first vendor to respond to a quote request has a significant advantage over those who respond later. This isn't controversial. Most salespeople accept it intuitively. And yet, most sales teams have no systematic way of knowing how fast they actually respond—or whether their response time is getting better or worse over time.

That gap between knowing speed matters and actually measuring it is where deals are lost.

Why Response Time Is Hard to Measure Without Infrastructure

Measuring response time sounds simple: record when the request arrived, record when you responded, subtract. But in practice, a few things complicate this:

Multiple channels. Quote requests arrive by email from different addresses, through different mail accounts, sometimes forwarded internally. Without a unified intake point, you're measuring across scattered threads.

Unstructured ownership. If no one is explicitly assigned to a request, no claim or handoff is ever recorded, so there is no clear event to compare against the arrival time. You'd have to manually audit your sent mail folder.

Business hours. A request that arrives at 11pm on Friday and is answered at 8am on Monday was not really neglected for 57 hours, even though that is what the clock says; in working time, it was answered first thing on Monday. Any meaningful response time metric needs to account for actual working hours.

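Volume variation. A team that answers 5 requests a day and one that answers 50 will have very different response time profiles. Averages can also mislead during high-volume periods, when a handful of very slow responses pull the mean far from what a typical customer experienced; reporting a median alongside the average makes that visible.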

Getting an accurate picture requires infrastructure that handles all of this automatically.
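To make the business-hours point concrete, here is a minimal Python sketch of an elapsed-working-time calculation. It assumes a Monday to Friday, 09:00 to 17:00 schedule with no holidays and naive local datetimes; the function name `business_hours_between` and the schedule constants are illustrative, not taken from any particular tool.

```python
from datetime import datetime, time, timedelta

# Assumed working schedule: Monday-Friday, 09:00-17:00, no holidays.
WORK_START = time(9, 0)
WORK_END = time(17, 0)


def business_hours_between(arrived: datetime, responded: datetime) -> float:
    """Return the working hours that elapsed between two naive local datetimes."""
    if responded <= arrived:
        return 0.0
    total = timedelta()
    day = arrived.date()
    while day <= responded.date():
        if day.weekday() < 5:  # 0-4 are Monday-Friday
            window_start = datetime.combine(day, WORK_START)
            window_end = datetime.combine(day, WORK_END)
            # Clip the request's open interval to this day's working window.
            start = max(arrived, window_start)
            end = min(responded, window_end)
            if end > start:
                total += end - start
        day += timedelta(days=1)
    return total.total_seconds() / 3600


# The example from the text: Friday 11pm to Monday 8am.
arrived = datetime(2024, 3, 1, 23, 0)   # a Friday
responded = datetime(2024, 3, 4, 8, 0)  # the following Monday
clock_hours = (responded - arrived).total_seconds() / 3600
print(clock_hours, business_hours_between(arrived, responded))
```

Under these assumptions the request accumulates 57 clock hours but zero business hours, which is the distinction a fair metric has to capture. A real deployment would also need time zones and a holiday calendar.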

The Accountability Gap

Here's a question most sales managers can't confidently answer: which team member has the longest average response time right now?

This isn't a gotcha question. It's useful information. If one person consistently takes twice as long to respond as their colleagues, there might be a workload problem, a prioritization problem, or a skill gap that coaching could address. Without the data, you can't have that conversation.

Response time accountability means being able to look at each person's handling time, not just the team average. It means being able to say "requests you claimed took an average of 4.5 business hours to get a first response this month" and have that be a factual statement, not an estimate.
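As a rough illustration of per-person reporting, the sketch below groups first-response times by the person who claimed each request. The record shape (owner name plus business hours to first response), the function names, and the sample values are all made up for the example; it assumes the business-hours figures have already been computed elsewhere.

```python
from collections import defaultdict
from statistics import mean, median


def response_stats_by_owner(records):
    """Group (owner, business_hours) pairs and summarize each owner's times."""
    by_owner = defaultdict(list)
    for owner, hours in records:
        by_owner[owner].append(hours)
    return {
        owner: {"count": len(times), "mean": mean(times), "median": median(times)}
        for owner, times in by_owner.items()
    }


def slowest_owner(stats):
    """Return the owner with the highest mean response time."""
    return max(stats, key=lambda owner: stats[owner]["mean"])


# Invented sample data: (owner, business hours to first response).
records = [
    ("alice", 2.0),
    ("alice", 4.0),
    ("bob", 3.0),
    ("bob", 6.0),
    ("bob", 4.5),
]
stats = response_stats_by_owner(records)
print(slowest_owner(stats), stats)
```

Keeping the count next to the mean and median matters: an average built from two requests deserves less weight in a coaching conversation than one built from fifty.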

Teams that have this data make better decisions about workload distribution, staffing, and training. Teams that don't are managing by feel.

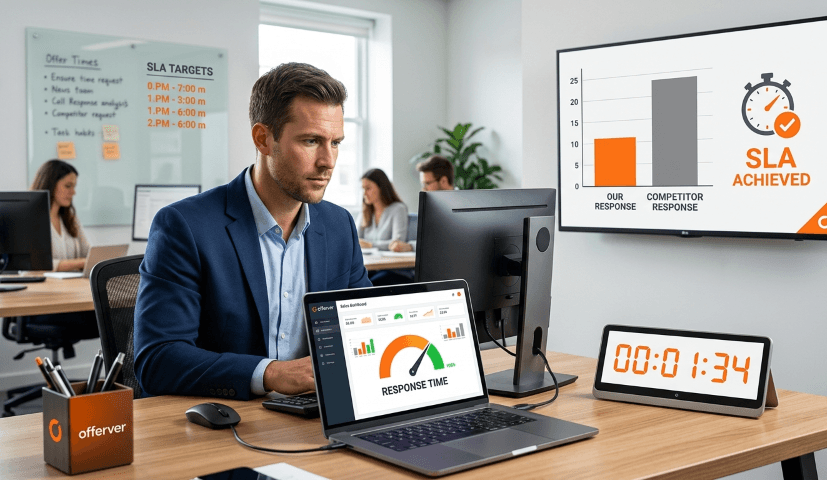

SLA as a Team Commitment

One concrete way to build accountability into your process is to define a response time target—what some teams call a service level agreement (SLA)—and track compliance against it.

An SLA for quote requests might look like: "Every new request should be claimed within 2 business hours of arrival and receive a response within 24 business hours." The specific numbers matter less than the fact that they're explicit and measured.

When you have an SLA, several things change:

Escalation becomes possible. If a request approaches the deadline without activity, it can trigger an automatic alert. The team knows something needs attention before a deadline passes silently.

Performance review becomes concrete. Instead of "we've been a bit slow lately," you can say "our SLA compliance was 78% last month, down from 91% the month before." That's a conversation starter with teeth.

Improvement is measurable. When you make a process change—redistribute workload, add a team member, change the intake process—you can see whether it actually moved the needle on response time, not just whether people felt like it did.
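The compliance and escalation ideas above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a definitive implementation: the 24-hour target comes from the example SLA in this article, while the 80% alert threshold, the `Request` fields, and the function names are hypothetical. Compliance here is computed over answered requests only; a team might reasonably choose to count overdue open requests as breaches too.

```python
from dataclasses import dataclass
from typing import List, Optional

RESPONSE_TARGET_HOURS = 24.0  # response target from the example SLA
ALERT_FRACTION = 0.8          # assumption: alert once 80% of the target is used up


@dataclass
class Request:
    request_id: str
    # Business hours until first response (answered) or since arrival (still open).
    elapsed_business_hours: float
    responded: bool


def sla_compliance(requests: List[Request]) -> Optional[float]:
    """Share of answered requests whose first response met the target."""
    answered = [r for r in requests if r.responded]
    if not answered:
        return None
    met = sum(1 for r in answered if r.elapsed_business_hours <= RESPONSE_TARGET_HOURS)
    return met / len(answered)


def needs_escalation(requests: List[Request]) -> List[str]:
    """IDs of open requests that are near or past the response target."""
    threshold = RESPONSE_TARGET_HOURS * ALERT_FRACTION
    return [
        r.request_id
        for r in requests
        if not r.responded and r.elapsed_business_hours >= threshold
    ]


# Invented sample data.
requests = [
    Request("Q-1", 3.0, True),
    Request("Q-2", 23.5, True),
    Request("Q-3", 30.0, True),   # answered, but after the target
    Request("Q-4", 10.0, True),
    Request("Q-5", 20.0, False),  # open and close to the deadline
    Request("Q-6", 5.0, False),   # open, plenty of time left
]
print(sla_compliance(requests), needs_escalation(requests))
```

Running the escalation check on a schedule is what turns the SLA from a number in a report into an alert that fires before the deadline passes.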

Making It Automatic

Tracking response times manually isn't sustainable. It adds administrative overhead and tends to get deprioritized as soon as the team gets busy—which is exactly when the data would be most useful.

The right approach is to instrument your quote request workflow so that timestamps are recorded automatically at each stage: when the request arrived, when someone claimed it, when a response was sent, when the request was resolved. The data should accumulate in the background without anyone having to think about it.
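One way to picture this instrumentation is a small record that stamps each stage the first time it happens. The sketch below is hypothetical: the `QuoteRequest` class, its `mark` and `elapsed` methods, and the stage names are illustrative choices that mirror the four stages listed above, and in practice the calls to `mark` would be triggered by the mail or ticketing system rather than by a person.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional

STAGES = ("arrived", "claimed", "responded", "resolved")


@dataclass
class QuoteRequest:
    request_id: str
    timestamps: Dict[str, datetime] = field(default_factory=dict)

    def mark(self, stage: str, at: Optional[datetime] = None) -> None:
        """Record when a stage happened; only the first occurrence is kept."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        # setdefault keeps the earliest timestamp, so a follow-up reply
        # does not overwrite the time of the first response.
        self.timestamps.setdefault(stage, at or datetime.now(timezone.utc))

    def elapsed(self, start: str, end: str) -> Optional[timedelta]:
        """Clock time between two stages, or None if either is missing."""
        if start not in self.timestamps or end not in self.timestamps:
            return None
        return self.timestamps[end] - self.timestamps[start]


t0 = datetime(2024, 3, 4, 9, 0, tzinfo=timezone.utc)
req = QuoteRequest("Q-42")
req.mark("arrived", t0)
req.mark("claimed", t0 + timedelta(minutes=30))
req.mark("responded", t0 + timedelta(hours=3))
req.mark("responded", t0 + timedelta(hours=5))  # later reply, ignored
print(req.elapsed("arrived", "responded"))
```

Note that `elapsed` returns plain clock time; a business-hours adjustment would be applied on top of these raw timestamps when reporting.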

From there, reporting can be on-demand: daily, weekly, or rolled up into a monthly review. The goal is to make the question "how fast are we responding?" answerable in seconds, not after a manual audit.

Response time isn't just a courtesy metric. For B2B teams competing on service as much as product, it's a direct predictor of win rate. Making it measurable—and making individuals accountable for it—is one of the highest-leverage improvements a sales team can make.